I've used Claude Code daily since it came out. Here are the best practices, tools, and configuration patterns I've picked up. Most of this applies to other coding agents (Codex, Gemini CLI) too.

TL;DR

My configs, plugins, and skills for Claude Code:

https://github.com/vinta/hal-9000

CLAUDE.md

The Global CLAUDE.md

Your ~/.claude/CLAUDE.md should only contain:

- Your preferences and nudges to correct agent behaviors

- You probably don't need to tell it YAGNI or KISS. They're already built in

Pro tip: before adding something to CLAUDE.md, ask it, "Is this already covered in your system prompt?"

Here are some parts of my CLAUDE.md I found useful:

<prefer_online_sources>

Your training data goes stale. Config keys get renamed, APIs get deprecated, CLI flags change between versions. When you guess instead of checking, the user wastes time debugging your confident-but-wrong output. This has happened repeatedly.

Look things up with the find-docs skill or WebSearch BEFORE writing code or config. This applies even when you feel confident about the answer. Always look up:

- Config file keys, flags, syntax, and environment variables for any tool

- Library/framework API calls, module paths, and parameter names

- CLI flags and subcommands

- Dependency versions

- Best practices and recommended patterns

- Assertions about external tool behavior, even when confident

The cost of a lookup is seconds. The cost of a wrong config key is a failed run plus a debugging round-trip.

</prefer_online_sources>

<auto_commit if="you have completed the user's requested change">

Use the commit skill to commit, always passing a brief description of what changed (e.g. /commit add login endpoint). Don't batch unrelated changes into one commit.

</auto_commit>

The Project CLAUDE.md

For project-specific instructions, put them in the project-level CLAUDE.md.

The highest-signal content in your project CLAUDE.md (or any skill) is the Gotchas section. Build these from the failure points Claude Code actually runs into.

Per File Type Rules

For language-specific or per-file rules, put them in ~/.claude/rules/, so Claude Code only loads them when editing those file types.

For instance, ~/.claude/rules/typescript-javascript.md:

---

paths:

- "**/*.ts"

- "**/*.tsx"

- "**/*.js"

- "**/*.jsx"

- "docs/**/*.md"

---

# TypeScript / JavaScript

- Before adding a dependency, search npm or the web for the latest version

- Pin exact dependency versions in package.json — no ^ or ~ prefixes

- Use node: prefix for Node.js built-in modules (e.g., node:fs, node:path)

- Use const by default, let when reassignment is needed, never var

- Prefer async/await over .then() chains

- Use template literals over string concatenation

- Use optional chaining (?.) and nullish coalescing (??) over manual checks

- Never use as any or unknown. Always write proper types/interfaces. Only use any or unknown as a last resort when no typed alternative exists

- Prefer interface over type for object shapes (extendable, better error messages)

- Avoid enums. Use union types (type Status = 'active' | 'inactive') or as const objects

- Don't prefix interfaces with I or type aliases with T (e.g., User not IUser)

- Mark properties and parameters readonly when they should not be mutated

- Do not add explicit return types. Let TypeScript infer them

<verify_with_browser if="you completed a frontend change (UI component, page, client-side behavior)" only_if="playwright-cli skill is installed in project or user scope">

After implementing frontend changes, use the playwright-cli skill to visually verify the result in a real browser. Check layout, responsiveness, and interactive behavior rather than assuming correctness from code alone.

</verify_with_browser>

The full rules I have:

Configurations

Settings

There are some useful configurations you could set in your ~/.claude/settings.json:

{

"env": {

"CLAUDE_CODE_BASH_MAINTAIN_PROJECT_WORKING_DIR": "1",

"CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING": "1",

"CLAUDE_CODE_EFFORT_LEVEL": "max",

"CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1",

"CLAUDE_CODE_NEW_INIT": "1",

"CLAUDE_CODE_NO_FLICKER": "1",

"ENABLE_CLAUDEAI_MCP_SERVERS": "false",

"USE_BUILTIN_RIPGREP": "0"

},

"permissions": {

"allow": ["..."],

"deny": ["..."],

"ask": ["..."],

"defaultMode": "auto",

"additionalDirectories": [

"~/Projects"

]

},

"cleanupPeriodDays": 365,

"showThinkingSummaries": true,

"showClearContextOnPlanAccept": true,

"voiceEnabled": true

}

Highlights:

- "CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING": "1": To mitigate the Claude Code Degradation issue

- "CLAUDE_CODE_EFFORT_LEVEL": "max": Same as above

- "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1": Enable Agent Team feature, a fancy way to consume a huge amount of tokens

- "permissions.defaultMode": "auto": We use this to pretend it's safer than --dangerously-skip-permissions

- "cleanupPeriodDays": 365: By default, your chat history (location: ~/.claude/projects/) will be deleted after 30 days. If you want to keep them, set a higher number

- "voiceEnabled": true: Enable Voice Dictation feature. Code like a boss!

The full settings I use:

Permissions

If you're not using a sandbox or devcontainer for Claude Code, you may want to block some evil commands in your ~/.claude/settings.json:

{

"permissions": {

"deny": [

"Read(~/.aws/**)",

"Read(~/.config/**)",

"Read(~/.docker/**)",

"Read(~/.dropbox/**)",

"Read(~/.gnupg/**)",

"Read(~/.gsutil/**)",

"Read(~/.kube/**)",

"Read(~/.npmrc)",

"Read(~/.orbstack/**)",

"Read(~/.pypirc)",

"Read(~/.ssh/**)",

"Read(~/*history*)",

"Read(~/**/*credential*)",

"Read(~/Library/**)",

"Write(~/Library/**)",

"Edit(~/Library/**)",

"Read(~/Dropbox/**)",

"Write(~/Dropbox/**)",

"Edit(~/Dropbox/**)",

"Read(//etc/**)",

"Write(//etc/**)",

"Edit(//etc/**)",

"Bash(su *)",

"Bash(sudo *)",

"Bash(passwd *)",

"Bash(env *)",

"Bash(printenv *)",

"Bash(history *)",

"Bash(fc *)",

"Bash(eval *)",

"Bash(exec *)",

"Bash(rsync *)",

"Bash(sftp *)",

"Bash(telnet *)",

"Bash(socat *)",

"Bash(nc *)",

"Bash(ncat *)",

"Bash(netcat *)",

"Bash(nmap *)",

"Bash(kill *)",

"Bash(killall *)",

"Bash(pkill *)",

"Bash(chmod *)",

"Bash(chown *)",

"Bash(chflags *)",

"Bash(xattr *)",

"Bash(diskutil *)",

"Bash(mkfs *)",

"Bash(security *)",

"Bash(defaults *)",

"Bash(launchctl *)",

"Bash(osascript *)",

"Bash(dscl *)",

"Bash(networksetup *)",

"Bash(scutil *)",

"Bash(systemsetup *)",

"Bash(pmset *)",

"Bash(crontab *)"

],

"ask": [

"Bash(curl *)",

"Bash(wget *)",

"Bash(open *)",

"Bash(*install*)",

"Bash(uv add *)",

"Bash(bun add *)",

"Bash(git push *)"

]

},

"hooks": {

"PreToolUse": [

{

"matcher": "Bash",

"hooks": [

{

"type": "command",

"command": "python3 ~/.claude/hooks/guard-bash-paths.py"

}

]

}

]

}

}

However, "deny": ["Read(~/.aws/**)", "Read(~/.kube/**)", ...] alone is not enough, since Claude Code can still read sensitive files through the Bash tool. You can write a simple hook to intercept Bash commands that access blocked files, like this guard-bash-paths.py hook.
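To make the idea concrete, here is a minimal sketch of what such a hook could look like. It is not the author's guard-bash-paths.py. It assumes the PreToolUse payload is JSON with tool_name and tool_input.command fields, and that exiting with code 2 blocks the call and sends stderr back to the agent. BLOCKED_DIRS, find_blocked_path, and decide are hypothetical names made up for this example.

```python
from __future__ import annotations

import json
import os
import shlex

# Hypothetical deny list for this sketch; mirror your own Read(...) deny rules.
BLOCKED_DIRS = ["~/.aws", "~/.kube", "~/.ssh"]


def _normalize(path: str) -> str:
    """Expand ~ and $VARS, then make the path absolute."""
    return os.path.abspath(os.path.expanduser(os.path.expandvars(path)))


def find_blocked_path(command: str, blocked_dirs: list[str] = BLOCKED_DIRS) -> str | None:
    """Return the first token in `command` that points inside a blocked directory."""
    roots = [_normalize(d) for d in blocked_dirs]
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar: fall back to a naive whitespace split.
        tokens = command.split()
    for token in tokens:
        path = _normalize(token)
        for root in roots:
            if path == root or path.startswith(root + os.sep):
                return token
    return None


def decide(payload: dict) -> tuple[int, str]:
    """Map a hook payload to (exit_code, message). Exit code 2 is assumed to block."""
    if payload.get("tool_name") != "Bash":
        return 0, ""
    command = payload.get("tool_input", {}).get("command", "")
    hit = find_blocked_path(command)
    if hit is None:
        return 0, ""
    return 2, f"Blocked: command touches protected path {hit}"


# Demo with a sample payload. A real hook script would instead end with:
#   code, message = decide(json.load(sys.stdin))
#   print(message, file=sys.stderr); sys.exit(code)
sample = json.loads('{"tool_name": "Bash", "tool_input": {"command": "cat ~/.aws/credentials"}}')
print(decide(sample))
```

This only catches paths that appear as standalone tokens. Forms like --file=~/.aws/config, command substitution, or a path assembled inside a script slip straight through.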

Even so, Claude Code can still write a one-off script that reads sensitive data and bypasses all of the defenses above, so the safest approach is still a sandbox.

Plugins

Claude Code Plugins are simply a way to package skills, commands, agents, hooks, and MCP servers. Distributing them as a plugin has the following advantages:

- Auto update (versioned releases)

- Auto hooks configuration (users don't need to edit their ~/.claude/settings.json manually)

- Skills have a /plugin-name:your-skill-name prefix (no more conflicts)

To install a plugin, you need to add a marketplace first. A marketplace is usually just a GitHub repo. Think of it as a namespace.

claude plugin marketplace add anthropics/claude-plugins-official

claude plugin marketplace add openai/codex-plugin-cc

claude plugin marketplace add slavingia/skills

claude plugin marketplace add trailofbits/skills

claude plugin marketplace add vinta/hal-9000

# then enter Claude Code to browse plugins

/plugin

Here are plugins I use:

Skills

Skills can contain executable scripts and hooks, not just Markdown. Use with caution! When in doubt, have your agent review them first.

Here are skills I use, mostly installed per project when needed:

# my skills

npx skills add https://github.com/vinta/hal-9000 --skill commit magi second-opinions -g

npx skills add https://github.com/vinta/dear-ai

# writing skills

npx skills add https://github.com/softaworks/agent-toolkit --skill writing-clearly-and-concisely humanizer naming-analyzer

npx skills add https://github.com/hardikpandya/stop-slop

npx skills add https://github.com/shyuan/writing-humanizer

# doc skills

npx skills add https://github.com/upstash/context7 --skill find-docs -g

# backend skills

npx skills add https://github.com/trailofbits/skills --skill modern-python

npx skills add https://github.com/vintasoftware/django-ai-plugins

npx skills add https://github.com/supabase/agent-skills

npx skills add https://github.com/planetscale/database-skills

npx skills add https://github.com/cloudflare/skills

# frontend skills

npx skills add https://github.com/vercel-labs/agent-skills

npx skills add https://github.com/vercel-labs/next-skills

# design skills

npx skills add https://github.com/openai/skills --skill frontend-skill

npx skills add https://github.com/pbakaus/impeccable

npx skills add https://github.com/nextlevelbuilder/ui-ux-pro-max-skill

# video skills

npx skills add https://github.com/remotion-dev/skills

# browser skills

npx skills add https://github.com/vercel-labs/agent-browser

npx skills add https://github.com/microsoft/playwright-cli

npx skills list -g

npx skills update -g

npx skills remove --all -g

Highlights:

- /brainstorming from superpowers: When in doubt, start with this skill

- /writing-skills from superpowers: Use this skill to improve your skills

- /skill-creator from claude-plugins-official: Use this skill to evaluate your skills

- /find-docs from context7: Find the latest documentation

- /frontend-design from impeccable: The better version of the official /frontend-design skill

- /simplify: Run it often, you will like it

- /insights: Analyze your Claude Code sessions

You can find more skills on skills.sh.

MCP Servers

You probably don't need any MCP servers if you can do the same thing with CLI + skills.

Context7 MCP

No, just use the ctx7 CLI with find-docs skill instead.

npx ctx7 setup

Playwright MCP

No, you should use the playwright-cli or agent-browser skill instead. Both tools support headed mode (the opposite of headless), if you'd like to see the browser.

npm install -g @playwright/cli@latest

npx skills add https://github.com/microsoft/playwright-cli

npm install -g agent-browser

agent-browser install

npx skills add https://github.com/vercel-labs/agent-browser

GitHub MCP

No, you should use the gh command instead.

brew install gh

Trail of Bits' gh-cli plugin is also worth a look, though you should check how it uses hooks to intercept GitHub fetch requests. Quite controversial for a security company.

Codex MCP

Yes, ironically. Other coding agents like Claude Code can use Codex via MCP, which is slightly more stable than directly invoking it with codex exec via CLI.

# Codex reads your local .codex/config.toml by default

claude mcp add codex --scope user -- codex mcp-server

# You can still override some configs

claude mcp add codex --scope user -- codex -m gpt-5.3-codex-spark -c model_reasoning_effort="high" mcp-server

However, now that OpenAI has released an official Claude Code plugin, codex-plugin-cc, you should probably use that instead.

Some Other Tips

Prompt Best Practices

Command Aliases

# in ~/.zshrc

alias cc="claude --enable-auto-mode --teammate-mode tmux"

alias ccc="claude --enable-auto-mode --continue --teammate-mode tmux"

alias cct='tmux -CC new-session -s "claude-$(date +%s)" claude --enable-auto-mode --teammate-mode tmux'

alias ccy="claude --teammate-mode tmux --dangerously-skip-permissions"

ccp() { claude --no-chrome --no-session-persistence -p "$*"; }

Use ccp for ad-hoc prompts:

ccp "commit"

ccp "list all .md in this repo"

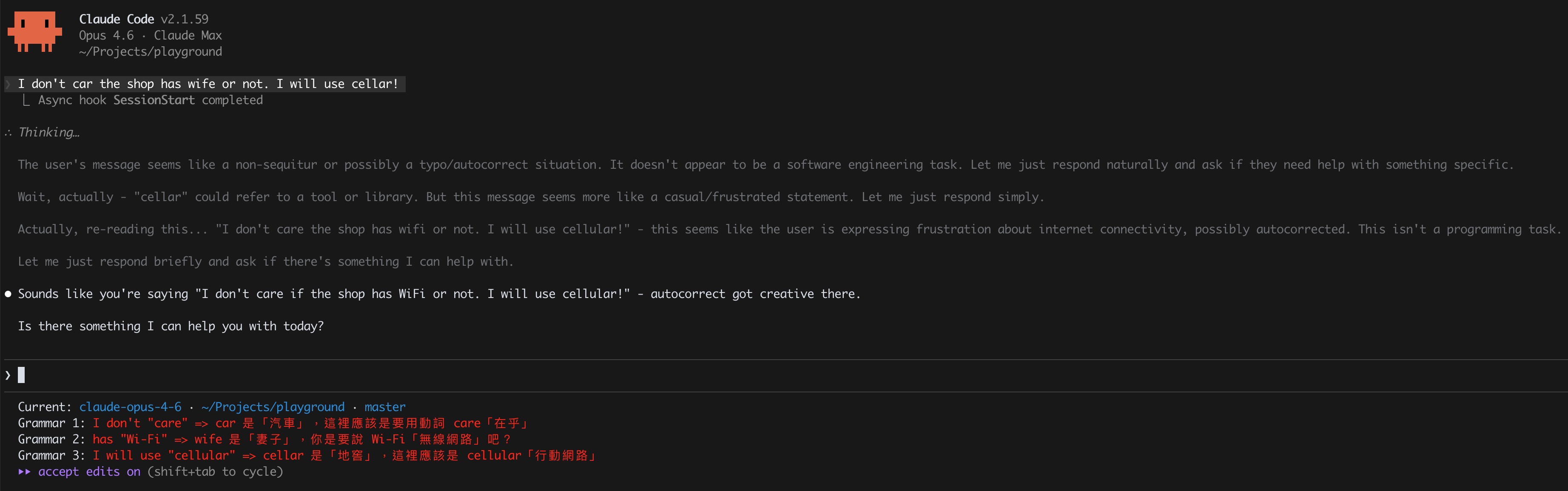

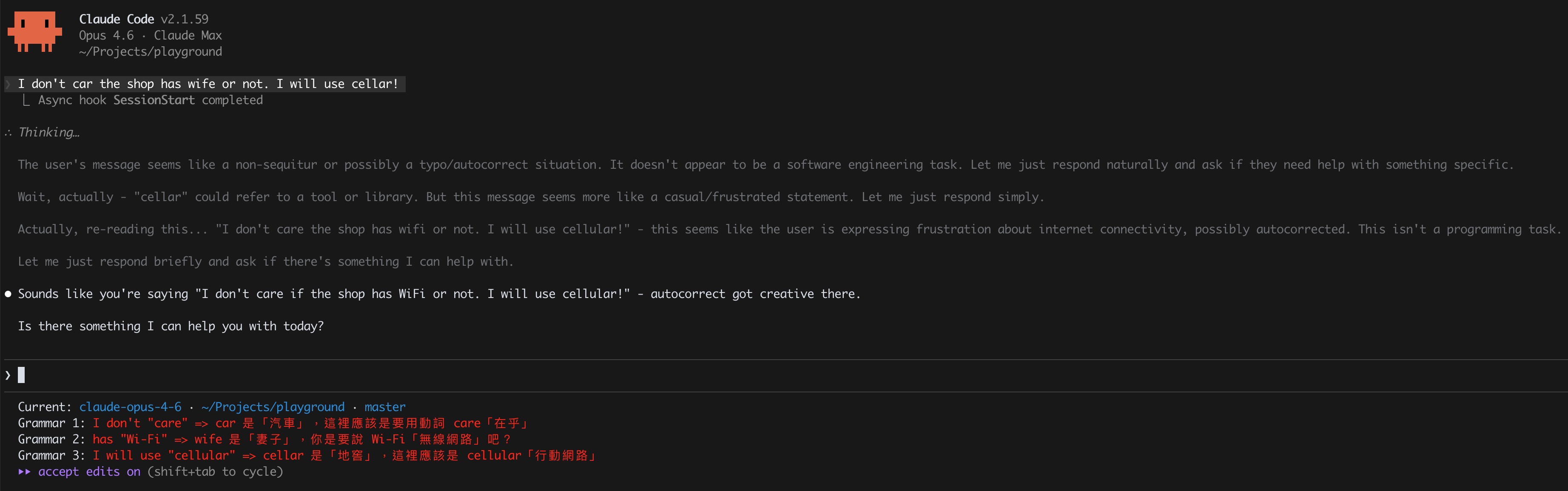

Customize Your Statusline

Claude Code has a customizable statusline at the bottom of the terminal. You can run any script that outputs text.

Mine shows the current model, the current working folder, the git branch, and a grammar-corrected version of my last prompt (because my English needs all the help it can get). The grammar correction runs an ad-hoc claude command inside the statusline script.
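As a rough illustration, the core of a statusline script can be one small formatting function. This sketch assumes Claude Code passes session info as JSON on stdin, with model.display_name and workspace.current_dir fields. Check those field names against the current docs. format_statusline is a hypothetical helper, and the grammar-correction part is left out.

```python
from __future__ import annotations

import os


def format_statusline(payload: dict, branch: str | None = None) -> str:
    """Build a one-line status string from the (assumed) statusline JSON payload."""
    model = payload.get("model", {}).get("display_name", "unknown-model")
    cwd = payload.get("workspace", {}).get("current_dir") or payload.get("cwd", "")
    # Show only the last path component to keep the line short.
    folder = os.path.basename(cwd.rstrip("/")) or cwd
    parts = [model, folder]
    if branch:
        parts.append(branch)
    return " | ".join(part for part in parts if part)


# Demo with a made-up payload. A real script would use json.load(sys.stdin)
# and could get the branch from `git branch --show-current`.
demo = {"model": {"display_name": "Opus"}, "workspace": {"current_dir": "/home/me/proj"}}
print(format_statusline(demo, branch="main"))
```

Keeping the formatting in a pure function makes it easy to test without a running Claude Code session.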

Run Ad-Hoc Claude Commands Inside Scripts

You can invoke claude as a one-shot CLI tool from hooks, statusline scripts, CI, or anywhere else. The trick is using the right flags to get a clean, isolated call with zero side effects:

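import shlex
import subprocess

# Flags for a clean, isolated one-shot call (each one is explained below)
cmd = """
claude
--model haiku
--max-turns 1
--setting-sources ""
--tools ""
--disable-slash-commands
--no-session-persistence
--no-chrome
--print
"""

# your_prompt is the prompt string you want to send
result = subprocess.run(
    [*shlex.split(cmd), your_prompt],
    capture_output=True,
    text=True,
    timeout=15,
    cwd="/tmp",  # run outside the project directory
)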

What each flag does:

- --setting-sources "": don't load hooks (avoids infinite recursion if called from a hook)

- --no-session-persistence and cwd="/tmp": avoid polluting your current context

- --tools "": no file access, no bash, pure text in/out

- --no-chrome: skip the Chrome integration
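If you call this from a statusline or a hook, it should fail quietly, not hang or crash. Here is one possible wrapper around the same flags. ask_claude and build_argv are hypothetical names, and the fallback behavior is my assumption about what a caller would want, not something Claude Code prescribes.

```python
from __future__ import annotations

import shlex
import subprocess

# Same flags as the snippet above, split once into an argv list.
BASE_ARGS = shlex.split(
    'claude --model haiku --max-turns 1 --setting-sources "" --tools "" '
    "--disable-slash-commands --no-session-persistence --no-chrome --print"
)


def build_argv(prompt: str) -> list[str]:
    """Append the prompt as the final positional argument."""
    return [*BASE_ARGS, prompt]


def ask_claude(prompt: str, fallback: str = "", timeout: float = 15, run=subprocess.run) -> str:
    """Return the model's reply, or `fallback` if the call fails or times out."""
    try:
        result = run(
            build_argv(prompt),
            capture_output=True,
            text=True,
            timeout=timeout,
            cwd="/tmp",
        )
    except (OSError, subprocess.TimeoutExpired):
        # claude is not installed, cwd is missing, or the call took too long.
        return fallback
    if result.returncode != 0:
        return fallback
    return result.stdout.strip() or fallback
```

The run parameter exists only so the function can be tested with a stub in place of the real CLI.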

Multi-Model Second Opinions

You can get independent code reviews or brainstorming input from other model families (Codex, Gemini) without leaving Claude Code. I have two skills for this:

- magi: Evangelion's MAGI system as a brainstorming panel. Three personas (Scientist/Opus, Mother/Codex, Woman/Gemini) deliberate in parallel

- second-opinions: Asks Codex and/or Gemini to review code, plans, or docs, then synthesizes their feedback

This works because each model family has different training biases. Claude might miss something Codex catches, and vice versa. It's especially useful for architecture decisions and "what should I build next" brainstorming.
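The fan-out itself is simple to sketch. The example below is not how magi or second-opinions work internally. It only shows the idea of sending one prompt to several CLIs in parallel and collecting the answers. The invocations in REVIEWERS (codex exec and gemini -p) are assumptions, so adjust them to whatever agents you have installed.

```python
from __future__ import annotations

import subprocess
from concurrent.futures import ThreadPoolExecutor

# Assumed CLI invocations; the prompt is appended as the last argument.
REVIEWERS = {
    "codex": ["codex", "exec"],
    "gemini": ["gemini", "-p"],
}


def run_cli(argv: list[str], timeout: float = 300) -> str:
    """Run one reviewer CLI and return its stdout, or a failure note."""
    try:
        result = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    except (OSError, subprocess.TimeoutExpired) as exc:
        return f"[failed: {exc}]"
    return result.stdout.strip()


def gather_opinions(prompt: str, reviewers: dict = REVIEWERS, run=run_cli) -> dict[str, str]:
    """Send the same prompt to every reviewer in parallel and collect the replies."""
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        futures = {
            name: pool.submit(run, [*argv, prompt])
            for name, argv in reviewers.items()
        }
        return {name: future.result() for name, future in futures.items()}
```

Threads are enough here because each worker just waits on a subprocess. The synthesis step, where one model reconciles the replies, is left out.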